In the week of April 13, two creative-AI launches landed back-to-back that, taken on their own, read as routine product news. On April 15, Adobe announced Firefly AI Assistant, a conversational “creative agent” that orchestrates work across Photoshop, Premiere, Lightroom, Illustrator, Express and the Firefly app from a single prompt. A public beta is due in the coming weeks; Adobe gave no general-availability date and no pricing. A day later, Tel Aviv-based Deepdub introduced what it calls “the world’s first agentic dubbing co-worker,” an AI system embedded inside studio localization workflows that handles dialogue generation, character voice continuity, timeline markers and structured QC across more than 130 languages. Read those two together with WPP Media’s April 1 launch of YouTube 5K and Google’s March 2026 Demand Gen drop, which slid Veo’s image-to-video generation directly into the Google Ads creative builder, and the cluster stops looking like a coincidence of release calendars. The first half of 2026 is when agentic AI moved out of demos and into the production workflows that ship advertising and television.

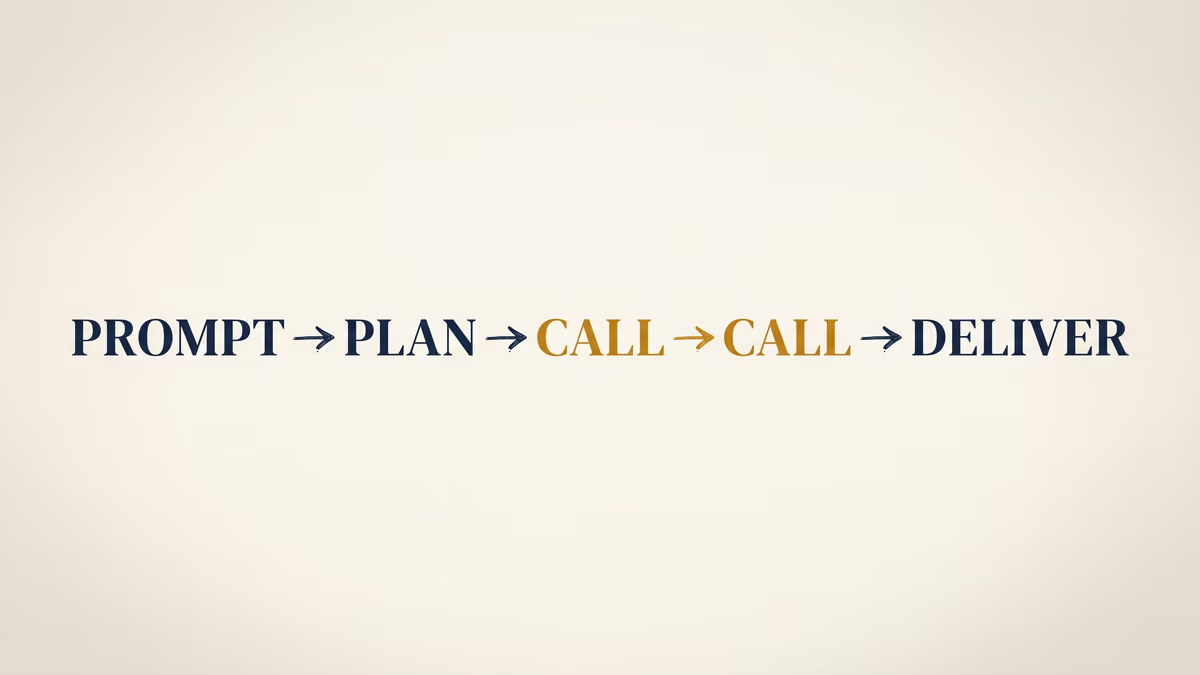

The shift the cluster names is structural. Where the 2023 cohort produced one asset per prompt, the 2026 cohort breaks a brief into a sequence of tool calls inside a professional environment and hands the output back in editable native formats the operator can keep working in. Adobe’s framing in the launch blog is that Firefly Assistant “orchestrates multi-step workflows” via a conversational interface, with pre-built Creative Skills, persistent context across sessions, Frame.io review hooks, and outputs that “remain editable in native Adobe formats.” Deepdub’s release describes a system that reads and modifies content at segment, character and track levels, then runs structured QC checks before export. CEO Ofir Krakowski’s framing is that the system “takes initiative alongside your team inside the very workflow where production happens.” The verbs are doing the work: orchestrate, take initiative, manage, validate.

The agency-side restructure has begun

The reason this matters more than the underlying model improvements is that the buy side is now productizing it. WPP Media’s YouTube 5K, launched at the start of April with Google as a co-development partner (reported so far only by VideoWeek; WPP has not yet published its own release), is the first time a Big Four holdco has shipped a packaged AI-creative product to clients that combines two layers: AI-driven channel curation across more than 5,000 YouTube channels for brand-suitability and risk, and AI-generated 10/15/20-second creative versioning evaluated against YouTube’s ABCD best practices. The single client data point WPP disclosed via VideoWeek, a 15% absolute brand lift on Subway, should be read with the usual vendor-trial caveats; one trial is not a benchmark. What is unambiguous is the org chart implied. WPP Media is offering its clients a single tooled service that does the brand-safety screening and the multi-format creative build, with the holdco as the operator of the agent rather than the operator of the buy.

That is also the framing Adobe applied to itself. The companion blog Adobe published the same day, “The age of creative agents and the rise of the creative director,” argues the agency role moves from tool operator to creative director of an agentic system. David Wadhwani, the President of Adobe’s Creativity & Productivity Business, put the position bluntly in Adobe’s launch release: “Adobe is leading the shift into a new era of agentic creativity, where you direct how your work takes shape and your perspective, voice and taste become the most powerful creative instruments of all.” Adobe also told TechCrunch, via VP of AI and Innovation Alexandru Costin, that the agent is meant to “remove some of the friction in learning this large catalog of tools we have.” Both statements describe the same restructure: the agent absorbs tool fluency, and the human moves up the stack toward direction. WPP’s and Adobe’s launches are the agency-side equivalent of what IAB Tech Lab’s Programmatic Governance Council launch and DoubleVerify’s first MRC TikTok viewability accreditation are doing on the supply and measurement sides: formalizing the connective tissue around an industry that already runs on machine-mediated decisions.

The funding rounds underneath the launches confirm the pivot is enterprise-anchored, not consumer-fueled. Synthesia closed a $200 million Series E in late January at a $4 billion valuation, roughly double its $2.1 billion mark a year earlier, led by Google Ventures with NVentures (Nvidia), Hedosophia, Accel, NEA and Air Street returning. CEO Victor Riparbelli framed the round in the company’s own primary release around what he called “a rare convergence of two major shifts: a technology shift with AI Agents becoming more capable, and a market shift where upskilling and internal knowledge sharing have become board-level priorities.” Riparbelli also gave a separate broadcast interview the same day that is exclusive to Bloomberg, and any quotes from it should be attributed to Bloomberg. TechCrunch corroborated the doubled valuation and the employee secondary structure, which Synthesia ran through Nasdaq Private Markets. Synthesia is the cleanest pure-enterprise read of the cohort: more than 90% of the Fortune 100 as customers per the company, and no consumer-facing surface to defend.

The disclosure cliff is six weeks out

The second force pressing on this cluster is regulatory, and it has a date on the calendar. The IAB published the industry’s first AI Transparency and Disclosure Framework in mid-January, voluntary and materiality-driven, scoped to synthetic images, video, audio, AI-generated voices of deceased and living persons, digital twins and conversational agents. IAB CEO David Cohen put the framing in the release: “While AI is transforming how we work from ideation to execution and measurement, we must get transparency and disclosure right, or we risk losing the trust that underpins the entire value exchange.” The framework references C2PA protocols for machine-readable provenance and notes EU and U.S. state laws, but it does not specifically name New York’s synthetic-performer disclosure law, signed by Governor Hochul in December and effective in June, which is the first state-level mandatory regime for AI-generated humans in commercial advertising. Civil penalties are modest on paper ($1,000 for a first violation, $5,000 for each subsequent one), and audio ads plus language-translation use are exempt; the operational weight is the disclosure-flow build, not the fine.
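To put the "modest on paper" point in numbers, the penalty schedule can be sketched as simple arithmetic. This is an illustration only: the function name `ny_penalty_exposure` is hypothetical, and the sketch assumes the reader already knows how many violations are being counted. How the statute counts a violation (per ad, per placement, or otherwise) is not specified here and is not modeled.

```python
def ny_penalty_exposure(violations: int,
                        first: int = 1_000,
                        subsequent: int = 5_000) -> int:
    """Total civil penalty in dollars for a given count of violations.

    Uses the schedule cited in the text: $1,000 for the first violation
    and $5,000 for each one after that. The counting unit for a
    "violation" is an assumption left to the caller.
    """
    if violations < 0:
        raise ValueError("violations must be non-negative")
    if violations == 0:
        return 0
    return first + subsequent * (violations - 1)


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(f"{n} violation(s): ${ny_penalty_exposure(n):,}")
```

Under these assumptions, 100 counted violations total $496,000, which supports the reading that the cost sits in building the disclosure flow rather than in the fines themselves.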

The June effective date is roughly six weeks from this week’s launches, which makes the most interesting omission in Adobe’s Firefly Assistant launch blog the absence of any mention of Content Credentials or C2PA, the provenance-metadata standard Adobe co-founded and has championed since 2019. Adobe is among the most credentialed C2PA voices in the industry; Firefly’s image generations have carried Content Credentials since launch. Reading the blog without prior knowledge of Adobe’s history, you would not know provenance was a concern of the company. Content Credentials remain wired into Firefly’s underlying outputs, so the omission reads as a framing choice rather than a strategy reversal. It is still a notable framing choice during a launch week six weeks before a state mandate begins demanding exactly the disclosure flow Content Credentials would help instrument. That is worth flagging without overreading.

Deepdub’s release has a similar gap. The PR Newswire-distributed announcement names a Voice Actors Royalty Program in passing and emphasizes “human expertise and AI” working together, but does not specify the disclosure metadata or watermarking the dubbed output carries. That matters against a labor backdrop where SAG-AFTRA’s video-game agreement and the WGA’s 2023 contract have made consent, compensation and transparency the procurement-grade asks for any synthetic voice or likeness. Krakowski’s quote in the release leans into the human-in-the-loop framing: a co-worker that “understands the craft, knows the project, and takes initiative alongside your team.” The standards-body and statutory layer the industry has been building piece by piece since the strikes is now the operating environment any of these launches has to plug into to ship for a regulated buyer.

Vibe.co, the French CTV ad-tech company that closed a $50 million Series B at a $410 million valuation last September, offers the closest available number on adoption velocity, with the heavy caveat that it is a vendor-platform projection. The company says 10% of ads running on its platform today use AI-generated creative, and it predicts that figure will reach 30% by the end of 2026. That is Vibe’s projection on Vibe’s surface, not a market-wide forecast, and the qualifier should stay attached wherever the figure is cited. The directional read is what is interesting: a CTV-buy-side platform is forecasting a tripling of AI-creative penetration on its own inventory inside eight months, ahead of the New York mandate going live, and during the same NewFronts season in which the buy side is closing 2026-27 deals.

What to read against this

The cohort has a negative case study running underneath it. OpenAI’s Sora consumer app shut down on the schedule announced in late March, with the API set to follow in September, after Disney walked away from a planned $1 billion investment and CODA, the Japanese trade group whose members include Studio Ghibli, pressed OpenAI on training-data use. TechCrunch’s reporting on the shutdown attributed the decision to compute economics (a burn of $1 million per day), engagement collapse, copyright friction and IPO-prep refocus. The contrast against Synthesia’s $150 million ARR, Adobe’s embedded Creative Cloud distribution, and Deepdub’s enterprise-pricing model is the structural one: agentic-creative-in-production gets paid for as productivity inside a workflow customers already have a P&L for. Consumer text-to-video has been paid for as novelty against a content-rights regime that has not yet stabilized.

Two calendars set the next things to watch. The first is the run-up to the New York law’s June effective date. Watch for whether the IAB updates its voluntary framework with NY-specific operational guidance, whether the major holdcos publish disclosure SOPs that align with both the IAB framework and the NY statute, and whether any agentic-creative product (Firefly Assistant, the Deepdub Co-Worker, YouTube 5K) ships its first NY-compliant disclosure surface. The second is the upfronts and NewFronts deal-close window through May and June: every CTV buyer signing 2026-27 commitments has to operationalize provenance before close, and the cluster of agentic-creative tooling now sitting inside professional workflows is the layer those disclosure flows will run through. The story that runs in parallel to this one, New York’s synthetic-performer disclosure law itself, is the regulatory clock these product launches will be measured against.